[Note to self: add this to the Quantitative Philosophy Index when it posts]

My personal life philosophy defines an individual’s value by the activities one engages in when in an autonomous state. More simply: what you do with your personal time quantifies your life’s meaningfulness. I don’t see the level of impact itself as the defining factor, since so few of us are ever granted the circumstances under which to achieve greatness, but that doesn’t preclude us from seeking a virtuous life, even if the tangible results are comparatively minor.

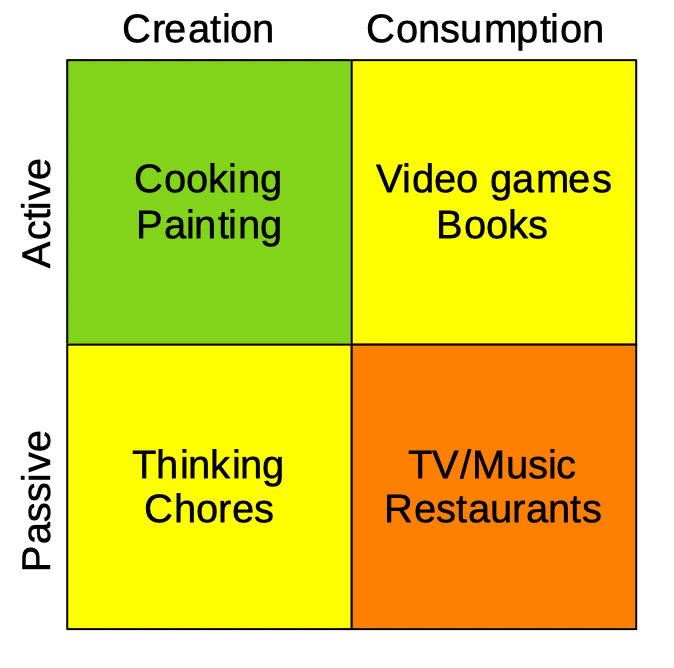

Setting this premise: after a day of my daughter watching anime and binge-eating, I tried to explain that she was, in some not-so-friendly terms, being a completely self-indulgent and useless sack of loafing teenage flesh. In the aftermath of that conversation, however, I thought it more helpful to create some definitions. Here’s how I break them down:

All voluntary human activities fall into one of four categories:

- Active Creation (Cra): activities that require direct engagement and production.

- Active Consumption (Coa): activities that involve using someone else’s creation, but still require direct engagement.

- Passive Creation (Crp): activities that are either a secondary component of active creation, or prerequisites/maintenance activities to support active creation.

- Passive Consumption (Cop): activities that involve using someone else’s creation in a manner that is strictly self-indulgent.

These activities are not equal in value. Cra is the highest, with Coa and Crp secondary, and with Cop the least.

As an example, washing dishes and doing some reading rank above watching TV all day, but rank below cooking dinner. Coa and Crp ultimately support Cra, which couldn’t take place without them, while Cop remains generally nonconstructive outside some mental health benefits. Obviously these baselines require some interpretation. I’d consider reading a classic novel to be Coa but reading a trashy romance novel Cop – one must be honest with oneself.

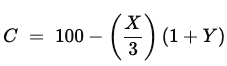

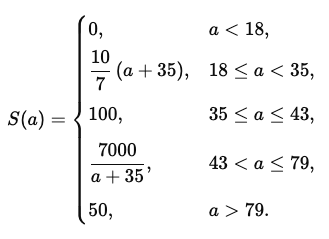

This is all fine for abstraction, but let’s quantify. What constitutes a day seized? At what point does one achieve virtue for the day? I’ll assign values:

Cra = 5

Coa = 3

Crp = 3

Cop = 1

This almost follows the Fibonacci sequence (1, 2, 3, 5). Indeed, Coa and Crp could probably be split into 2-point and 3-point tiers, but I’ll keep it simpler for the sake of this exercise.

Virtue = Cra + Coa + Crp + Cop

Day’s value = amount of daily virtue.

As for a daily virtue benchmark, here are the highlights from a recent Saturday, which I feel was a notable example of one such virtuous day. I…

Made pizza, made my own cheesy bread, cleaned the kitchen x3, cleaned out the fireplace, started a fire, watched Fallout, took measurements and material inventory for needed house projects.

I’m sure there were more, but these are what I remember. This would come out to:

5+5+3+3+3+3+3+1+3 = 29
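The tally can be checked with a short Python sketch. The category attached to each activity is inferred from the order of the numbers in the sum, so treat the mapping as an assumption. The names `POINTS` and `saturday` are made up for illustration.

```python
# Point values per category, as assigned above.
POINTS = {"Cra": 5, "Coa": 3, "Crp": 3, "Cop": 1}

# Assumed category for each activity, read off the order of the sum.
saturday = [
    ("made pizza", "Cra"),
    ("made cheesy bread", "Cra"),
    ("cleaned the kitchen (1st time)", "Crp"),
    ("cleaned the kitchen (2nd time)", "Crp"),
    ("cleaned the kitchen (3rd time)", "Crp"),
    ("cleaned out the fireplace", "Crp"),
    ("started a fire", "Crp"),
    ("watched Fallout", "Cop"),
    ("took measurements and material inventory", "Crp"),
]

# A day's score is the sum of the points for every activity done that day.
total = sum(POINTS[category] for _, category in saturday)
print(total)  # 29
```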

It was a busy day, so let’s round down to 25 to be more realistic with goals. A virtuous day off requires 25 points. As for a working day, let’s say 12 – half, rounded down.

Now math:

Cra = 5, Coa = 3, Crp = 3, Cop = 1

S := the sum of the points for every activity done that day (5 per Cra, 3 per Coa or Crp, 1 per Cop)

O = day off

V = day is virtuous

V ⟺ (O ∧ S ≥ 25) ∨ (¬O ∧ S ≥ 12)
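The same rule as a minimal Python sketch. It assumes S means the day's total points across all activities. The function name `is_virtuous` and its parameters are hypothetical, chosen only for this example.

```python
DAY_OFF_THRESHOLD = 25   # points needed on a day off
WORK_DAY_THRESHOLD = 12  # half of 25, rounded down


def is_virtuous(score: int, day_off: bool) -> bool:
    """V <=> (O and S >= 25) or (not O and S >= 12)."""
    return (day_off and score >= DAY_OFF_THRESHOLD) or (
        not day_off and score >= WORK_DAY_THRESHOLD
    )


print(is_virtuous(29, day_off=True))   # True: the benchmark Saturday
print(is_virtuous(12, day_off=True))   # False: not enough for a day off
print(is_virtuous(12, day_off=False))  # True: enough for a working day
```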

Since the kid has no philosophy background, the concepts of virtue and excellence seem to escape her comprehension. Maybe this could add context. If not, it’s a good overview and reminder to myself for when I start to feel lazy, now that I’ve thought the concept through. Virtue is universally available. All we have to do is act towards it.

–Simon